| Title |

Coal/Water Slurries: Fuel Preparation Effects on Atomization and Combustion |

| Creator |

Holve, D. J.; Meyer, P. L. |

| Publisher |

University of Utah |

| Date |

1984 |

| Spatial Coverage |

presented at Tulsa, Oklahoma |

| Abstract |

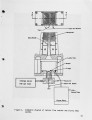

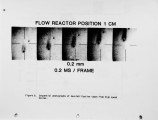

A laminar flow reactor has been developed that provides a uniform temperature and oxidizing medium in which to study the initial stages of combustion of various condensed-phase fuels, in particular, coal/water slurries. In conjunction with the flow reactor, a new in situ particle counter has been used to determine the atomization characteristics of various slurry mixtures and to monitor the change in absolute size distribution with increasing residence time in the reactor. Both the initial size distributions and their subsequent evolution vary markedly among the fuel preparations, emphasizing the importance of the physical properties of the slurry mixture in determining combustion characteristics. The effects of fuel type on atomization, char burnout, and flyash formation are quantitatively described in the paper. Size distribution measurements of five different coal/water slurry mixtures are compared with each other and with dry pulverized coal. High-speed movies of reacting coal/water slurries have also been obtained and provide a qualitative validation of the detailed size distributions. In addition, the movies show the formation of a devolatilization cloud surrounding the slurry particles, similar to that previously observed in pulverized coal particle combustion. |

| Type |

Text |

| Format |

application/pdf |

| Language |

eng |

| Rights |

This material may be protected by copyright. Permission required for use in any form. For further information please contact the American Flame Research Committee. |

| Conversion Specifications |

Original scanned with a Canon EOS-1Ds Mark II 16.7-megapixel digital camera and saved as a 400 ppi uncompressed TIFF at 16-bit depth. |

| Scanning Technician |

Cliodhna Davis |

| ARK |

ark:/87278/s67w6fqq |

| Setname |

uu_afrc |

| ID |

1994 |

| Reference URL |

https://collections.lib.utah.edu/ark:/87278/s67w6fqq |